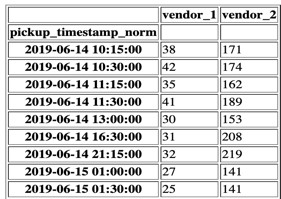

Common table expressions allow you to define tables at the top of your query rather than in-line as sub-queries. Playbook reports use common table expressions to help you format your data to fit each query. Some reports only require an events table, while others might require additional information; these additional details are noted in the guide for each report.

The events table should include one row per event and should have three columns:

* user_id, which matches the user_id in the people table
* event_name, which is how you identify which action a user took
* occurred_at, a timestamp of when the event took place

Events can be logins, purchases, clicks, screen views, or any other action taken by users. The people table, by contrast, should have one row per user.
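As a rough sketch of that pattern, the query below uses two common table expressions to reshape raw tables into a people table (user_id, activated_at) and an events table (user_id, event_name, occurred_at) before the report logic runs. The source tables `app.accounts` and `app.activity_log`, and their column names, are hypothetical placeholders; substitute whatever your own database calls them.

```sql
-- Hypothetical source tables: app.accounts and app.activity_log.
-- Only the CTE output columns follow the schema described in the text.
WITH people AS (
  SELECT
    id         AS user_id,       -- unique identifier, one row per user
    created_at AS activated_at   -- first signup or other initializing event
  FROM app.accounts
),
events AS (
  SELECT
    account_id AS user_id,       -- matches people.user_id
    action     AS event_name,    -- which action the user took
    logged_at  AS occurred_at    -- when the event took place
  FROM app.activity_log
)
-- Example report logic: how often each event occurs and for how many users.
SELECT
  e.event_name,
  COUNT(*)                  AS event_count,
  COUNT(DISTINCT e.user_id) AS users
FROM events e
JOIN people p
  ON p.user_id = e.user_id
WHERE e.occurred_at >= p.activated_at
GROUP BY e.event_name
ORDER BY event_count DESC;
```

Because the renaming happens only inside the two CTEs, the rest of the query can stay unchanged when the underlying tables differ.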

The activated_at timestamp in the people table represents the first time that user signed up, their first purchase, or any other initializing event.

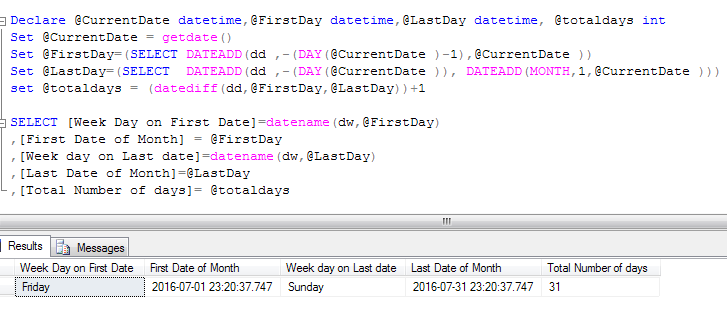

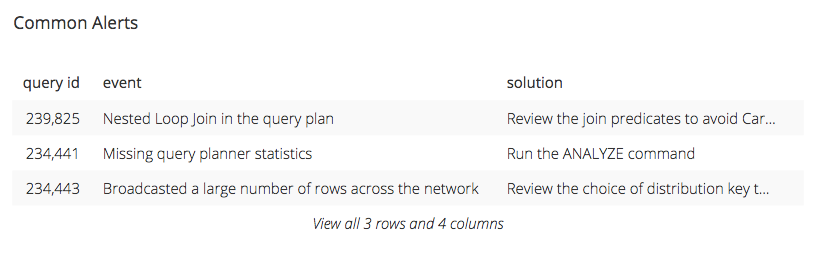

This document outlines how to use Mode Playbook with your data. The three sections below outline what data is required, how to format reports to fit that data, and how to edit reports to make them compatible with different types of databases.

The Playbook reports are built on top of a common schema of people and events. Most reports use only these two tables, one for people and one for events. The people table can represent users, customers, accounts, or any other type of identity. This table is expected to have one row per user (or customer or account), and typically has two columns. One column should be a user_id, which is a unique identifier for that user. The second column should be an activated_at timestamp.

Use these queries to determine your WLM queue and execution times, which can help tune your Amazon Redshift Cluster.

Utilizing an Amazon Redshift data source in Chartio is quite popular: we currently show over 2,000 unique Redshift Source connections, and our support team has answered almost 700 tickets regarding Amazon Redshift sources. These questions vary greatly, but a theme that is often discussed is query tuning. Time, size, and speed are all concerns that live at or near the top of the list for any computer user, especially in this day and age, where the difference between winning and losing in business could be, in extreme cases, milliseconds or nanoseconds. Things may not be that dramatic every day, but when you are experiencing slow queries, or even queries failing to load, you will want to do some analysis of your Amazon Redshift instances. This analysis can help you determine if some of your queries can be eliminated due to redundancy or if your queries can be tuned to increase performance.

How to Use Amazon Redshift Diagnostic Queries

Luckily, Amazon Redshift shares many insights into query tuning and also provides us with diagnostic queries. In my previous life as a Customer Success Engineer, that site was very helpful in getting our clients the answers they needed when they wrote in to us regarding Redshift performance issues.

Determining Queue Times

The query reads, in part:

`… total_queue_time / 1000000 AS queue_seconds, w.total_exec_time / 1000000 exec_seconds, (w. … total_exec_time) / 1000000 AS total_seconds, (w. … total_exec_time)::float) * 100 AS percent_wlm_queue_time FROM stl_wlm_query w LEFT JOIN stl_query q ON q. … queue_start_time >= DATEADD(day, -7, CURRENT_DATE) AND w. … starttime >= DATEADD(day, -7, CURRENT_DATE) ORDER BY w. …`

We got this query directly from the webpage I mention above, and it tells us quite a bit about this Redshift instance's performance:

* Some of the query's syntax, so we can tell what query this is
* The service class and slot class, which refer to the Workload Management (WLM) configuration
* The total time this query stayed in the WLM's queue and how long it took to actually execute, which are then also used to determine the entire time this query took to get from Chartio to the Amazon Redshift instance and return the data you were looking for

We added one additional column to the table, percent_wlm_queue_time, which we use to determine what share of the query's total time was spent waiting in line in the WLM queue. This column in particular is useful in diagnosing whether the query is the problem or the WLM queue could use some review.

All of this information in a vacuum isn't likely enough to do a full diagnosis of your Amazon Redshift WLM queue performance, but it will help you analyze the queries being sent to your cluster.
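For reference, a queue-time diagnostic of this kind might look roughly like the sketch below. It reads the Redshift system tables `stl_wlm_query` and `stl_query` and adds a percent_wlm_queue_time column. Only the seconds columns, the percentage expression, the two tables, and the seven-day filters come from the text; the remaining select-list columns, the join keys, the `total_queue_time > 0` filter, and the sort column are assumptions and are marked as such in the comments.

```sql
-- Sketch only: anything marked "assumed" is not taken from the original post.
SELECT
  w.query,                                      -- assumed
  SUBSTRING(q.querytxt, 1, 100) AS querytxt,    -- assumed: start of the query text
  w.queue_start_time,                           -- assumed
  w.service_class AS class,                     -- assumed alias
  w.slot_count    AS slots,                     -- assumed alias
  -- total_queue_time and total_exec_time are stored in microseconds
  w.total_queue_time / 1000000 AS queue_seconds,
  w.total_exec_time  / 1000000 AS exec_seconds,
  (w.total_queue_time + w.total_exec_time) / 1000000 AS total_seconds,
  -- share of total time spent waiting in the WLM queue
  (w.total_queue_time
     / (w.total_queue_time + w.total_exec_time)::float) * 100
     AS percent_wlm_queue_time
FROM stl_wlm_query w
LEFT JOIN stl_query q
  ON q.query = w.query                          -- assumed join keys
 AND q.userid = w.userid
WHERE w.queue_start_time >= DATEADD(day, -7, CURRENT_DATE)
  AND w.total_queue_time > 0                    -- assumed: only queries that queued
  AND q.starttime >= DATEADD(day, -7, CURRENT_DATE)
ORDER BY w.total_queue_time DESC;               -- assumed sort column
```

As a worked example of the added column, a query that waited 2 seconds in the queue and then ran for 6 seconds has a percent_wlm_queue_time of 2 / (2 + 6) × 100 = 25.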
